There's a principle we follow at Replicant that sounds simple at first, but changes everything about how we build AI agents:

Don't let AI make a decision you don't need AI for.

AI is remarkable at understanding intent, empathizing with frustrated customers, and holding natural conversations. This makes it a great solution for automating straightforward, tier-1 requests.

But as enterprises seek to automate higher-tier requests with AI, business workflows grow far more complex and demand more than conversational ability. They demand correctness, speed, and reliability, all things that AI alone isn't built to guarantee.

Our approach: guide AI intelligence with deterministic execution.

How deterministic execution makes AI Agents reliable when things get complex

Deterministic steps provide structure, sequencing, and guardrails. AI can then operate within that structure, bringing the conversation to life with understanding, empathy, and natural language.

And neither works as well alone. AI without structure is prone to attention drift. Structure without AI sounds robotic. But together, they deliver reliable outcomes with natural, engaging conversations.

To show what this looks like in practice, we'll walk through a single scenario: a lost package claim. A customer calls because their package never arrived. The AI Agent needs to look up the order, check eligibility, and apply business rules (waiting periods, cutoff dates, etc.).

It also needs to offer resolution options in a specific order, starting with reshipping and then refunding. If anything fails along the way, the system must recover gracefully.

This is exactly the kind of workflow where deterministic execution gives AI the structure it needs to perform at its best.

Avoiding the "wall of text" problem

There are two ways to build this lost package workflow. The difference between them explains why we bet on deterministic execution.

Approach A: give AI a big instruction manual

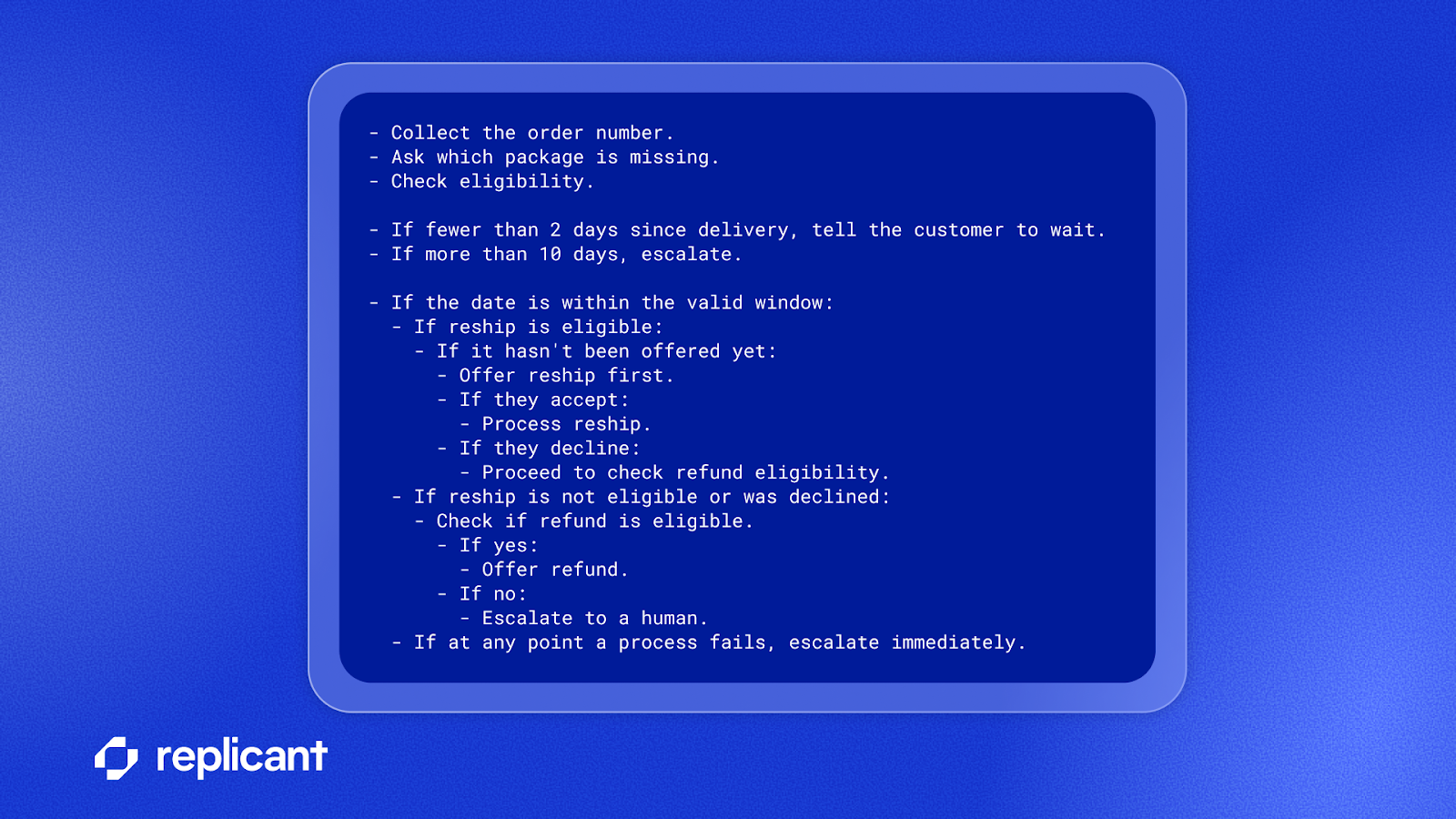

Approach A is to write out every step in prose and tell the AI exactly what to do.

This instruction block can easily grow into a massive wall of text as you add more custom condition checks and specific business rules. As your AI Agent gets tested and handles real traffic, new edge cases and rules will inevitably be added, making this block even longer and harder to follow. Nevertheless, this approach asks AI to follow it perfectly, every time, across every conversation turn.

The problems compound fast:

- The AI will forget steps. A massive instruction block is a cognitive burden even for the best models. They skip steps, apply rules out of order, or forget business constraints mid-conversation. On the tenth turn of a complex call, the model's attention often drifts from a rule buried deep in the instructions.

- Side effects multiply. Generic rules like telling the AI "don't offer to escalate unless the customer asks" get buried under 15 other rules. Attention drifts again, and it starts offering escalations unprompted.

- Latency increases. The AI must reason through the entire instruction block on every turn, even when only one rule is relevant. More instructions mean slower responses.

- Auditability is zero. When the AI Agent does the wrong thing, you can't trace which rule it failed on. You only know the AI "didn't follow instructions."

Approach B: move the right rules out of AI's hands

Not every business rule needs to be handled deterministically. AI is perfectly capable of deciding things like how to phrase an offer or responding empathetically to a frustrated customer. The question is: for each rule, what's the cost of getting it wrong?

Consider three questions:

- How critical is a given rule? Processing a claim that's outside the eligibility window has real financial consequences. It must be done right 100% of the time. But what about the sequence in which two resolution options are presented, like offering reship before refund? That's a business preference. If the AI occasionally offers a refund first, the customer still gets a valid resolution. Is 99% accuracy good enough?

- Can it be enforced automatically? Some rules have a clean trigger: a number, a flag, a result from an action. If the system can see that the delivery was one day ago, it can block the claim without involving the AI at all. Other rules are fuzzier and can fit more naturally in the AI's domain.

- What's the hidden cost of keeping it in instructions? This consideration gets overlooked a lot. Even if 99% accuracy is acceptable for a single rule, every rule left in the instruction block is in direct competition for the AI's attention. Pull one rule out, and you don't just make that rule more reliable, you make every remaining rule more reliable too, because the AI has less to juggle.

That third point changes the calculus. Take the reship-before-refund sequencing: maybe 99% accuracy is "fine." But if it's one of 15 rules in the instruction block, every rule becomes slightly less likely to be followed.

If you can enforce it deterministically with a simple condition (and you can), why leave it to chance? You're not just guaranteeing the sequencing. You're freeing up the AI's attention for the things that actually need its intelligence. The AI's instructions can now shrink to just a few lines.

Business rules like the 2-day wait period, the 10-day cutoff, the reship-before-refund sequencing, and the automatic transfer on failure all move into deterministic logic that runs outside the AI.

The result:

- The AI's mental load drops dramatically, so it can focus on what it's good at: understanding the customer and having a natural conversation.

- Rules that must be right 100% of the time are guaranteed because they run as predefined logic, not as suggestions the AI might forget.

- Rules that the AI could handle can still be offloaded when they're easy to enforce, which frees up attention for everything else.

- Adding a new resolution option is easier: it takes one new step instead of an edit to a massive instruction wall and a hope that the AI notices.
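To put a rough number on the hidden cost of a crowded instruction block, here is a back-of-the-envelope sketch. It assumes, purely for illustration, that every rule left in the instructions is followed independently with the same 99% probability. Real models don't behave this neatly, and the function name is hypothetical. The sketch only shows how small per-rule error rates compound. It does not model how attention shifts when rules are removed.

```python
def all_rules_followed(per_rule_accuracy: float, rule_count: int) -> float:
    """Probability that every rule is followed, assuming independent rules."""
    return per_rule_accuracy ** rule_count


# 15 rules in the instructions vs. 11 after moving four into deterministic
# logic (wait period, cutoff, offer sequencing, transfer on failure).
crowded = all_rules_followed(0.99, 15)
trimmed = all_rules_followed(0.99, 11)
print(f"15 rules: {crowded:.3f}")  # prints "15 rules: 0.860"
print(f"11 rules: {trimmed:.3f}")  # prints "11 rules: 0.895"
```

Under these simplified assumptions, the chance that all 15 rules are followed is about 86%, compared with about 90% for 11 rules, and the four rules that moved out are no longer probabilistic at all.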

A massive instruction block is a wish list. Deterministic logic is a guarantee.

Pre-defining business logic with deterministic workflow chaining

When one step in a workflow completes, the next step should trigger automatically with conditional branching and without going back to the AI.

In our lost package scenario, the system doesn't ask the AI, "What should I do next?" after the eligibility check returns. Instead, deterministic logic takes over.

This isn't a simple linear chain. It's a decision tree that runs automatically. Look at what's happening:

- The sequencing is guaranteed. Reship is always offered before a refund. The AI can't accidentally offer a refund first, no matter how the conversation unfolds.

- State is tracked by the system, not the AI. The "already offered" flags are managed automatically. In the instruction-only version, the AI must remember every offer it’s made across multiple conversation turns. Eventually, it will forget.

- Multi-step sequences fire instantly. When processing a reship, the system automatically confirms the action, sets the resolution type, and records the outcome.

- The AI only does what it's good at. At each junction, the AI's job is narrow and well-suited to its strengths: present the offer naturally and understand whether the customer accepts or declines.

- Context isolation. The AI only knows what it needs to know, when it needs to know it. If the customer accepts the reship, the logic for handling refunds is irrelevant. In the instruction-only version, the AI holds every possible path in its head simultaneously, increasing the chance it confuses rules from different workflows. In the deterministic version, once the reship path is chosen, the refund instructions effectively disappear. The AI can't confuse policies for a workflow it isn't currently in.

- Focused instructions. Because deterministic logic handles the branching, instructions are progressively revealed as the conversation moves forward. When the customer reaches the reship offer, the AI receives a fresh instruction about presenting that offer. It arrives prominent and recent, not buried in paragraph five of a massive, static rulebook. Fresh instructions get followed. Stale ones get overlooked.

In the instruction-only version, the AI must simultaneously track which offers have been made, enforce the reship-before-refund sequencing, decide what to do after each outcome, and remember all of this across multiple conversation turns. That's four jobs piled on top of actually talking to the customer.

With deterministic chaining, the AI still follows instructions, but instead of memorizing an entire manual upfront, it receives one step at a time, like a guided tutorial. Each instruction is short, specific, and arrives at exactly the right moment. This plays to the AI's strengths: it's naturally great at conversation, understanding nuance, and adapting to the customer.

What it's not great at is reliably enforcing a long checklist of business rules across a multi-turn conversation. Deterministic chaining keeps the AI in its comfort zone and lets predefined logic handle the rest.
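The chaining described above can be sketched in a few lines. This is a minimal illustration, not Replicant's implementation: `ClaimState`, `run_offers`, and the outcome strings are hypothetical names, and `customer_accepts` stands in for the AI-led turn where the offer is presented and the reply is interpreted.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional, Set

OFFER_ORDER = ["reship", "refund"]  # sequencing lives in code, not in the prompt


@dataclass
class ClaimState:
    offered: Set[str] = field(default_factory=set)  # "already offered" flags
    resolution: Optional[str] = None


def next_offer(state: ClaimState) -> Optional[str]:
    """Return the first offer not yet made, or None when all are exhausted."""
    for offer in OFFER_ORDER:
        if offer not in state.offered:
            return offer
    return None


def run_offers(state: ClaimState,
               customer_accepts: Callable[[str], bool],
               process: Callable[[str], bool]) -> str:
    """Chain offers in order until one is accepted and processed."""
    while (offer := next_offer(state)) is not None:
        state.offered.add(offer)
        # The AI's only job here: present this one offer and read the reply.
        if not customer_accepts(offer):
            continue
        if process(offer):
            state.resolution = offer
            return f"resolved:{offer}"
        return "transfer_to_specialist"  # processing failed: predefined path
    return "transfer_to_specialist"      # every offer was declined


# A customer who declines the reship and accepts the refund.
state = ClaimState()
outcome = run_offers(state,
                     customer_accepts=lambda offer: offer == "refund",
                     process=lambda offer: True)
print(outcome)  # prints "resolved:refund"
```

Because the order and the flags live in `OFFER_ORDER` and `ClaimState`, a refund can't be offered before a reship in this sketch, however the conversation unfolds.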

The AI handles the conversation. The code handles the workflow.

Removing ambiguity with reactive business rules

Some business rules shouldn't wait for the AI to remember them. They should kick in the instant the relevant data arrives.

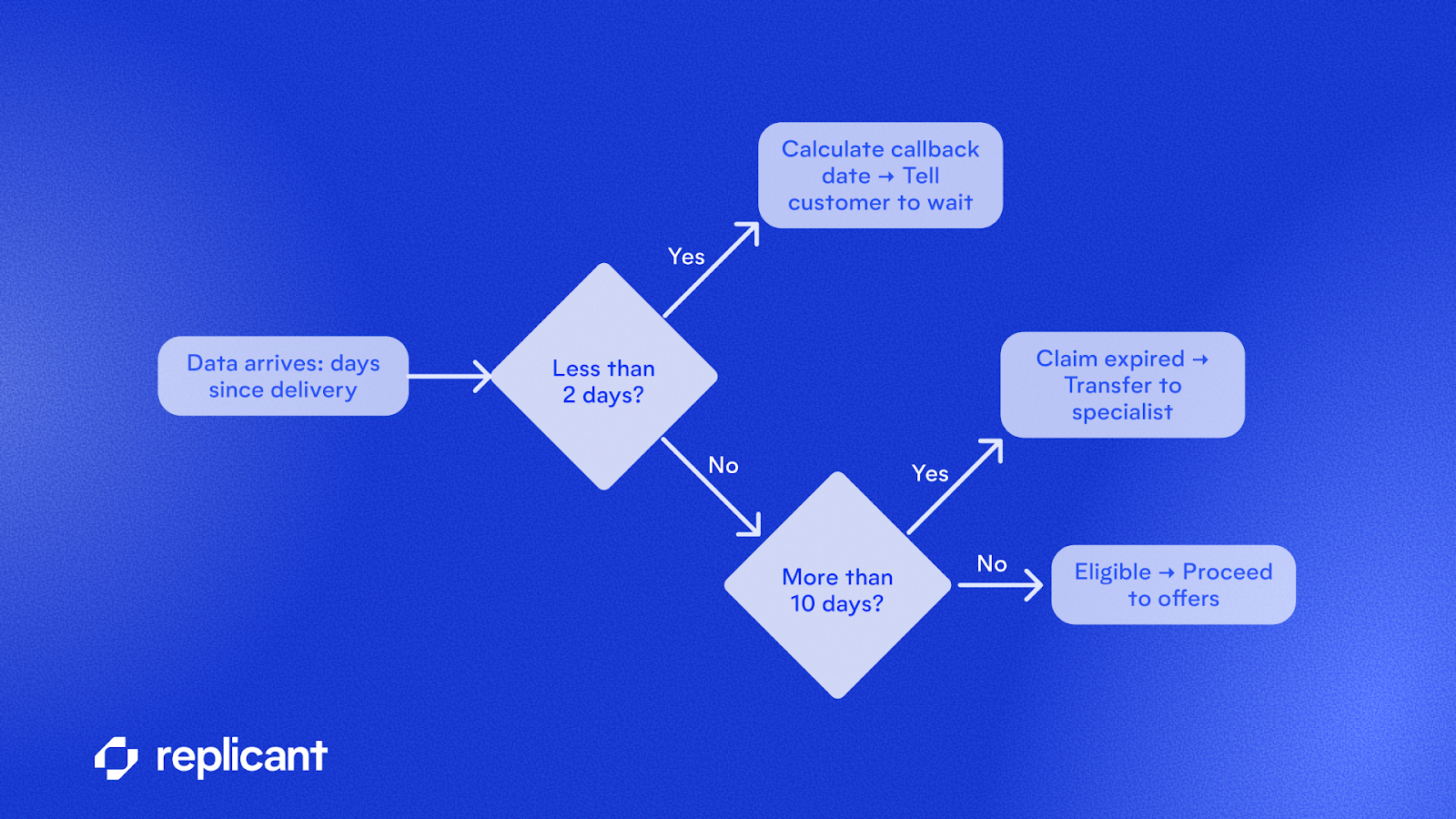

In our lost package scenario, the eligibility check returns a number: the number of days since the package was delivered. The moment that number arrives, deterministic logic evaluates it.

These gates kick in the instant data arrives, requiring no AI processing or instruction parsing:

- Less than 2 days? The system tells the customer they must wait until two full days have passed since delivery, calculates the exact callback date, and stops the workflow. The AI never gets a chance to proceed with an ineligible claim.

- More than 10 days? The claim is outside the window. The system automatically transfers to a specialist.

- Between 2 and 10 days? The workflow proceeds to resolution offers.

These aren't suggestions to the AI. They're hard gates. The 2-day and 10-day rules kick in the instant the data is available with zero ambiguity. This is exactly the kind of rule where 99% accuracy isn't good enough. Processing a claim outside the eligibility window has real financial and compliance consequences. It needs to be right every single time.
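As a rough sketch, the three gates above fit in one small function. The names `eligibility_gate` and `GateResult` and the action strings are hypothetical, and the callback date assumes the customer may call back as soon as the two-day wait has elapsed.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

WAIT_DAYS = 2     # claims open two full days after delivery
CUTOFF_DAYS = 10  # claims older than this go to a specialist


@dataclass(frozen=True)
class GateResult:
    action: str                          # "wait", "transfer", or "proceed"
    callback_date: Optional[date] = None


def eligibility_gate(days_since_delivery: int, today: date) -> GateResult:
    """Evaluate the time-window rules as soon as the day count is known."""
    if days_since_delivery < WAIT_DAYS:
        remaining = WAIT_DAYS - days_since_delivery
        return GateResult("wait", today + timedelta(days=remaining))
    if days_since_delivery > CUTOFF_DAYS:
        return GateResult("transfer")
    return GateResult("proceed")


result = eligibility_gate(days_since_delivery=1, today=date(2024, 3, 10))
print(result.action, result.callback_date)  # prints "wait 2024-03-11"
```

The boundaries are inclusive here (days 2 through 10 proceed), matching the "between 2 and 10 days" wording. The AI never sees the comparison. It only receives the outcome it needs to talk about.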

In the instruction-only version, these rules are buried in paragraph three of a massive instruction block. The AI must find and apply them correctly every time. And because they share the instruction block with 15 other rules about offers, refunds, and escalation, the AI's attention is divided. Sometimes it applies the 2-day rule. Sometimes it doesn't.

This reactive approach extends far beyond time windows. Any business rule that depends on data arriving mid-conversation can kick in the instant the triggering data appears. This includes compliance disclosures triggered by the caller's location, routing decisions triggered by account type, and escalation thresholds triggered by error counts.
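One way to picture this generalization is a small store that evaluates its rules whenever a field is written. The sketch below is illustrative only: `ReactiveContext`, the field names, the region code, and the action names are all hypothetical.

```python
from typing import Any, Callable, Dict, List, Tuple

Rule = Tuple[Callable[[Any], bool], str]  # (condition, action name)


class ReactiveContext:
    """Conversation data store that evaluates rules whenever a field is set."""

    def __init__(self) -> None:
        self.data: Dict[str, Any] = {}
        self.rules: Dict[str, List[Rule]] = {}
        self.fired: List[str] = []  # actions triggered so far, in order

    def on(self, field: str, condition: Callable[[Any], bool], action: str) -> None:
        """Register a rule that reacts to writes on one field."""
        self.rules.setdefault(field, []).append((condition, action))

    def set(self, field: str, value: Any) -> None:
        """Store the value, then run every rule registered for that field."""
        self.data[field] = value
        for condition, action in self.rules.get(field, []):
            if condition(value):
                self.fired.append(action)


ctx = ReactiveContext()
ctx.on("caller_region", lambda r: r == "region_a", "read_compliance_disclosure")
ctx.on("account_type", lambda t: t == "business", "route_to_business_queue")

ctx.set("account_type", "business")   # rule fires as the data arrives
ctx.set("caller_region", "region_b")  # condition not met, nothing fires
print(ctx.fired)  # prints "['route_to_business_queue']"
```

Each rule is declared once and checked at the moment its triggering data appears, so nothing depends on the AI recalling it later in the conversation.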

Business rules are defined once and executed every time: instantly, reliably, and without adding to the AI's mental load.

Deterministic error recovery: a fail-safe for edge cases

Every step in a real-world workflow can fail. Systems go down, payments time out, lookups return errors. The question isn't if something will fail, but whether your AI Agent will know what to do when it does.

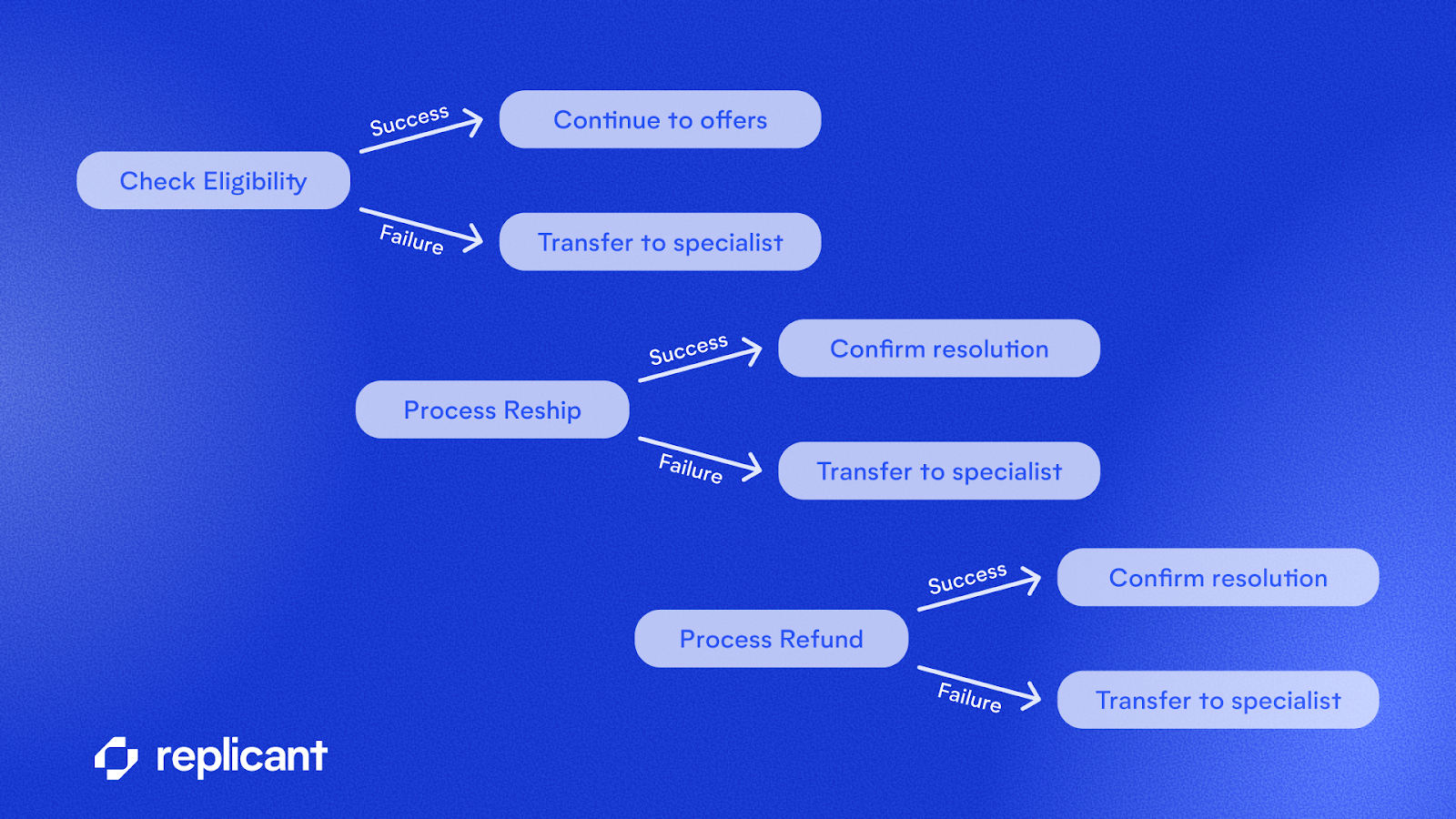

In our lost package workflow, there are three points where conversations can stray from the happy path.

With deterministic error recovery, every step has a success path and a failure path that is predefined. In other words, the AI is never asked to improvise when something breaks:

1. If the eligibility check fails because the lookup system is down or returns an error… The system doesn't tell the AI "the eligibility check failed, figure out what to do." It automatically transfers the caller to a specialist who can handle the case. Instantly. Every time. The customer never hears "the system is having trouble" followed by an awkward pause while the AI figures out what to say next.

2. If reship processing fails due to a payment timeout or an inventory issue… The system automatically transfers to a specialist. The AI never learns that the reship failed, so it can't blurt out "sorry, your reship failed" and leave the customer wondering "what now?"

3. If all offers are exhausted because the customer declines everything available… The system automatically transfers to a human rather than letting the AI flounder with "I'm not sure what else I can do" when the chain reaches the end and no resolution is accepted.

In the instruction-only version, the AI learns about every failure directly. It sees "reship failed" and must now decide what to tell the customer. Maybe it says "sorry, your reship couldn't be processed," revealing an internal failure the customer didn't need to know about.

Maybe it improvises: "let me try something else." Or maybe it just stalls.

With deterministic error recovery, we never put the AI in a position to fail in the first place. The transfer happens immediately, and the customer is seamlessly handed to a live agent who can help.
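A minimal sketch of the idea that every step carries its own success and failure path might look like this. `Step`, `execute`, and the step names are hypothetical, and the simulated outage is only there to exercise the failure path.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Step:
    name: str
    run: Callable[[], bool]  # performs the action; may raise or return False
    on_success: str          # next step when the action succeeds
    on_failure: str          # predefined fallback; the AI is never consulted


def execute(step: Step) -> str:
    """Run one step and return the name of the next step."""
    try:
        ok = step.run()
    except Exception:
        ok = False  # outages and timeouts take the same path as a failure
    return step.on_success if ok else step.on_failure


def flaky_lookup() -> bool:
    raise TimeoutError("lookup system unavailable")  # simulated outage


check = Step("check_eligibility", flaky_lookup,
             on_success="apply_gates", on_failure="transfer_to_specialist")
reship = Step("process_reship", lambda: True,
              on_success="confirm_reship", on_failure="transfer_to_specialist")

print(execute(check))   # prints "transfer_to_specialist"
print(execute(reship))  # prints "confirm_reship"
```

The failure never reaches the AI as something to interpret. The workflow simply moves to the fallback that was chosen when the step was defined.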

Beyond this single workflow, the platform also tracks cumulative errors across the entire conversation. If a conversation accumulates too many total failures, even across different steps, the system recognizes that the customer experience is degrading and proactively offers a transfer to a live agent instead of keeping the caller trapped in a loop.

This is a hard safety net, not an AI judgment call, and it prevents any caller from being trapped in an infinite error loop.
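The conversation-wide safety net can be sketched as a simple counter. The class name `ErrorBudget` and the threshold of three are assumptions for illustration. The source doesn't state what the actual limit is.

```python
from collections import Counter


class ErrorBudget:
    """Counts failures across the whole conversation, regardless of step."""

    def __init__(self, max_errors: int = 3) -> None:  # threshold is illustrative
        self.max_errors = max_errors
        self.by_step: Counter = Counter()  # kept per step for auditability

    def record_failure(self, step_name: str) -> bool:
        """Record a failure; return True once a live agent should be offered."""
        self.by_step[step_name] += 1
        return sum(self.by_step.values()) >= self.max_errors


budget = ErrorBudget(max_errors=3)
signals = [budget.record_failure(step)
           for step in ("lookup", "address_update", "lookup")]
print(signals)  # prints "[False, False, True]"
```

The third failure trips the threshold even though no single step failed three times, which is the cross-step behavior described above.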

Real-world systems fail. The question is whether your AI Agent knows what to do when it happens.

Putting it all together

Without deterministic execution, complex tier-2 requests, like a lost package claim, rely heavily on chance. AI must navigate a massive instruction manual, execute perfect compliance across every conversation turn, and try its best to handle every failure correctly.

With deterministic execution, enterprises can scale automation to more complex use cases without increasing risk. The AI's job shrinks to a few lines: collect the order info and talk to the customer. Predefined logic handles the chain, the failures, and the rules.

For businesses, the decision isn't whether to "make everything deterministic." It's about asking, for each rule: how critical is it, can it be enforced automatically, and what's the cost of keeping it in the AI's instructions?

Some rules must be right 100% of the time; those are non-negotiable. Others might be fine at 99%. But if they're easy to enforce deterministically, pulling them out frees up the AI's attention for everything else.

These three patterns share a common theme: the AI Agent is intelligent where it should be and deterministic where it needs to be.

- Workflow chaining keeps multi-step processes on track. Predefined logic guarantees ordering, tracks state, and chains decisions instantly.

- Reactive business rules are the nervous system. They kick in the instant a condition is met, keeping the agent compliant in real time without adding to the AI's mental load.

- Error recovery is the safety net. Every step has a predefined fallback. The AI never improvises on failure, and no customer is ever left hanging.

At Replicant, we don’t let AI make a decision that we don't need AI for. We don't let it figure out what to do when things go wrong, or how to sequence steps that must be in a specific order, or when a business rule needs to kick in the instant a condition is met. That's what deterministic execution is for.

As a result, our AI Agents sound natural and empathetic because our AI is focused almost entirely on conversation. And we’re trusted at scale because we execute complex business logic with the reliability of predefined rules.

No forgotten steps. No improvised error handling. No missed business rules. And a leaner instruction set that makes our AI better at the things that are actually enhanced by its intelligence.

Schedule time with an expert to learn more about how Replicant can transform your contact center with AI.
