Enterprise-grade safeguards for production AI

As AI agents move from pilot to production, the tolerance for error drops to zero. What’s acceptable in experimentation is unacceptable on your front line.

Replicant’s Hallucination Checker automatically detects and prevents inaccurate or unverifiable responses across all bots before they reach customers. This gives enterprises the confidence to scale AI without increasing risk.

The challenge: Accuracy at scale

One incorrect claim, like a misquoted policy, a fabricated fee explanation, or an outdated return window, isn’t just a bad answer. It creates:

- Brand risk

- Compliance exposure

- Escalations to live agents

- Erosion of trust in your automation program

As AI handles more complex customer conversations, hallucinations shift from a theoretical risk to a measurable operational impact. The question becomes whether AI agents can be trusted on your front line.

What’s new: Real-time and historical hallucination detection

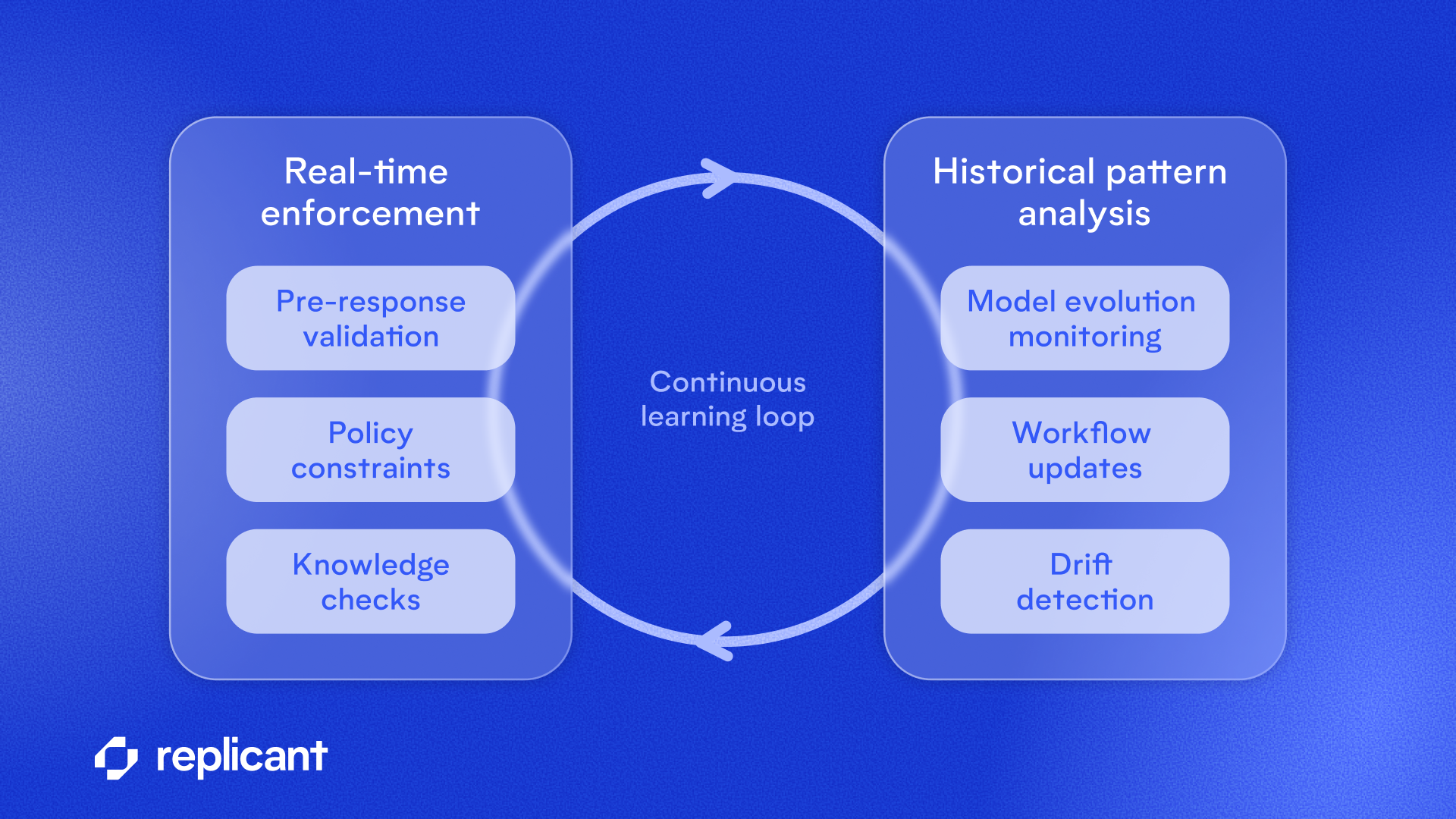

Replicant’s Hallucination Checker adds an automated safeguard layer across all Replicant AI agents, so behavior stays consistent and protection improves over time, without manual tuning. It works in two ways:

- Real-time enforcement. Before delivery, each response is validated against approved knowledge sources, conversation context, and system expectations. Inaccurate or unverifiable outputs are intercepted and corrected in real time, before they reach the customer.

- Historical pattern analysis. Past conversations are continuously analyzed to identify emerging hallucination patterns, including subtle drift tied to policy updates, model changes, or newly added or updated workflows.

Hallucination Checker flags issues for review and automated remediation, ensuring that as your AI footprint expands, the safeguards scale with it.

Why it matters: Preventing decay in production AI

One of the most common failure points in enterprise AI is model decay. Business rules change. Policies evolve. Knowledge bases update. Without continuous validation, AI agents drift, and small inaccuracies compound over time. Production AI requires a different operating model.

Hallucination Checker is designed to prevent this drift. By combining real-time validation with historical analysis, Replicant ensures AI performance doesn’t degrade as automation scales. For technical leaders, this means:

- Fewer production rollbacks

- Reduced reliance on manual review or tuning

- Lower escalation rates tied to incorrect responses

- Greater confidence expanding automation to new use cases

Built on 1B+ minutes of production AI

Hallucination Checker reflects lessons learned from operating over 1 billion agent minutes in production across complex contact centers. It is part of Replicant’s broader self-improving AI architecture, which includes AI agent guardrails and is designed to test, validate, and refine performance as automation scales.

Ready to see it in action? Connect with our team to explore how to expand your automation with confidence.
